How to Avoid Disaster and Save Lives

On April 26, 1986, a sudden explosion lit up the night at a local power plant. The operators were ill-equipped and unprepared for the situation they now had to contain. They spent the next few hours in confusion, trying to get a grip on what had happened, while the surrounding population remained largely unaware of the invisible danger around them. It would take over a month to finally get things under control.

The name of this power plant was Chernobyl.

Disasters usually have a multitude of factors leading up to the event. In the case of Chernobyl, there were design errors in the plant and a number of ignored operating instructions. Arguably, however, the greatest fault ultimately lay not with the operators but with the designers. Chernobyl was a plant designed by physicists for physicists.

It was not a plant run by physicists.

If you work in any industry where human safety is a concern (such as healthcare, transportation, or construction), design and testing are vital not only to the success of your business but also to the lives of others. Knowing who your users are is just as important as checking that your calculations are correct.

Chernobyl is not a stand-alone incident.

Seven years earlier, on a small island in Pennsylvania, there was another nuclear accident. As at Chernobyl, correct operating instructions at the plant were ignored, and there was a mechanical fault. These two factors alone were not enough to cause major damage, though. Reports suggest it was ultimately a misleading indicator light that escalated the severity of the accident. A valve that should have been closed was stuck open, but it took several hours and a new shift of operators to figure out what was wrong. An indicator light on the control panel was off, which the operators took to mean that the valve was closed. What they didn’t know, however, was that the light showed only whether the valve had been signaled to close, not whether it had actually closed. The meaning of the various controls in the system was not as well understood by the operators of the plant as it was by the designers. (NUREG/CR-1250, Volume 1: Three Mile Island: A Report to the Commission and the Public, M. Rogovin et al., 1980)

Focusing merely on the tasks that users need to complete addresses only a small part of the problem.

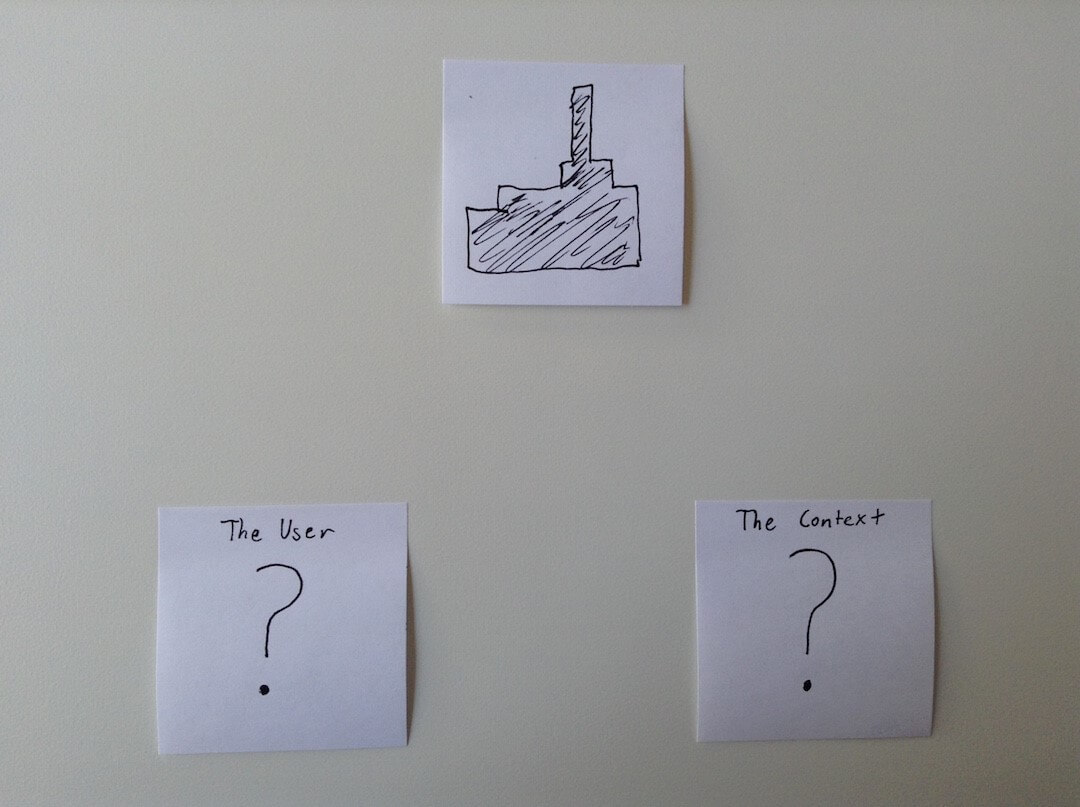

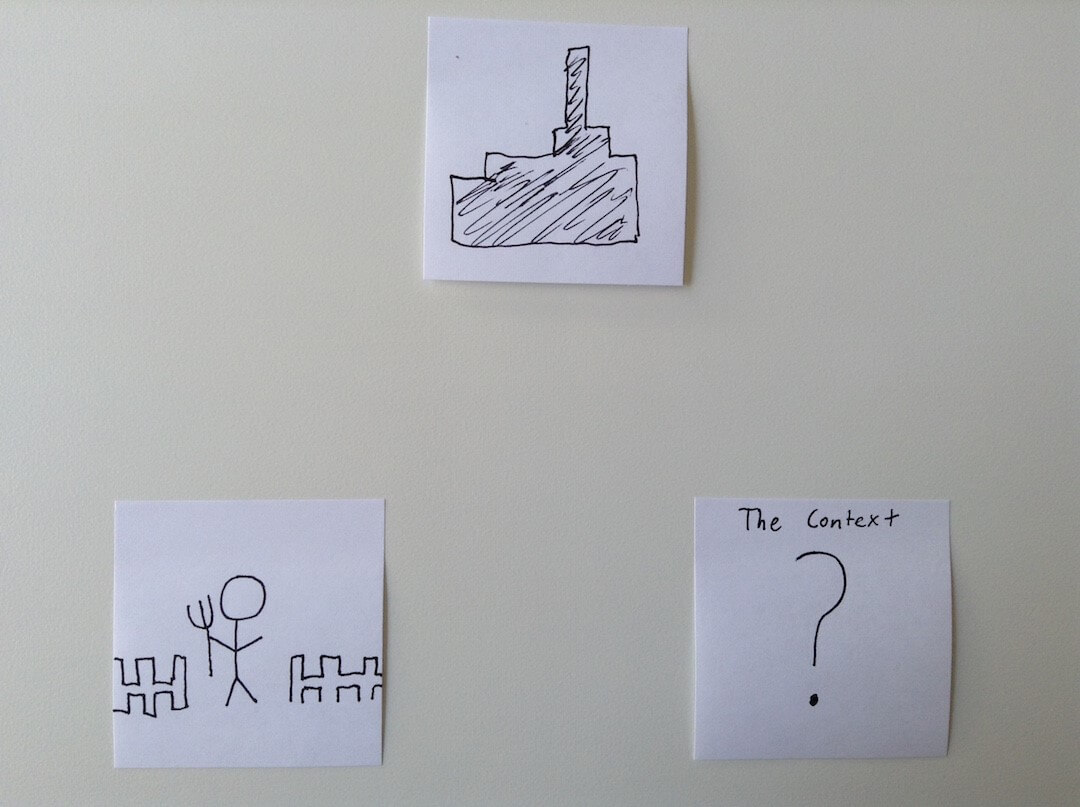

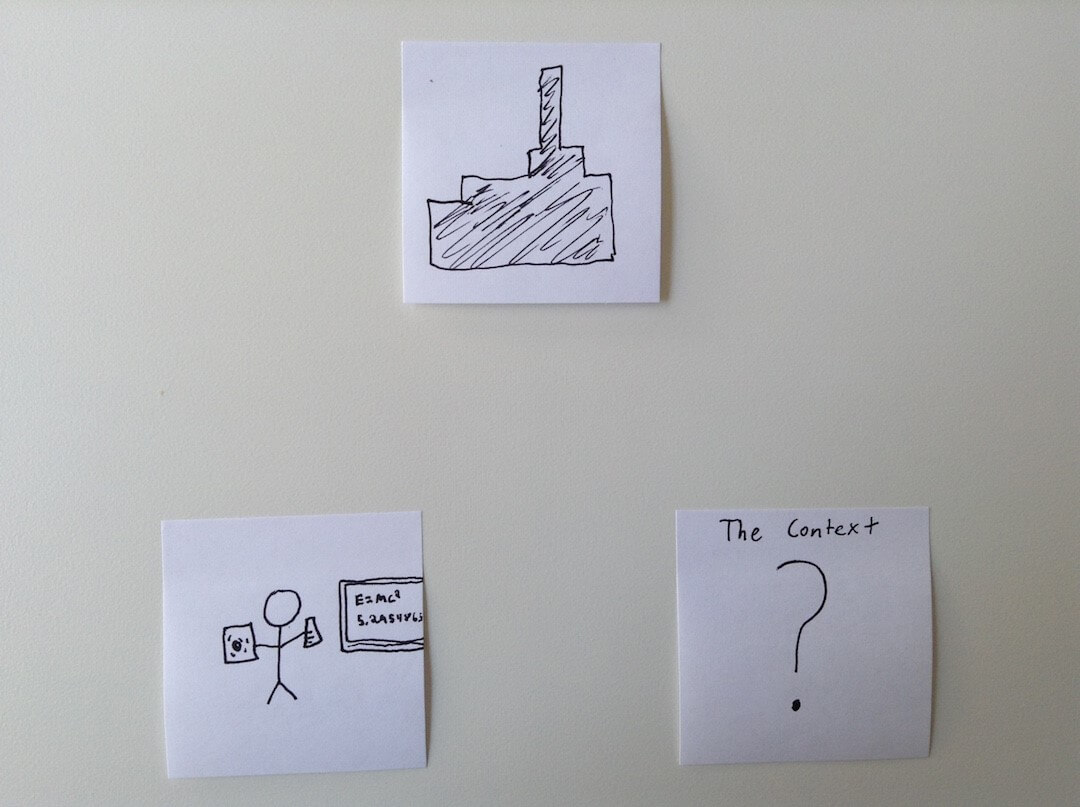

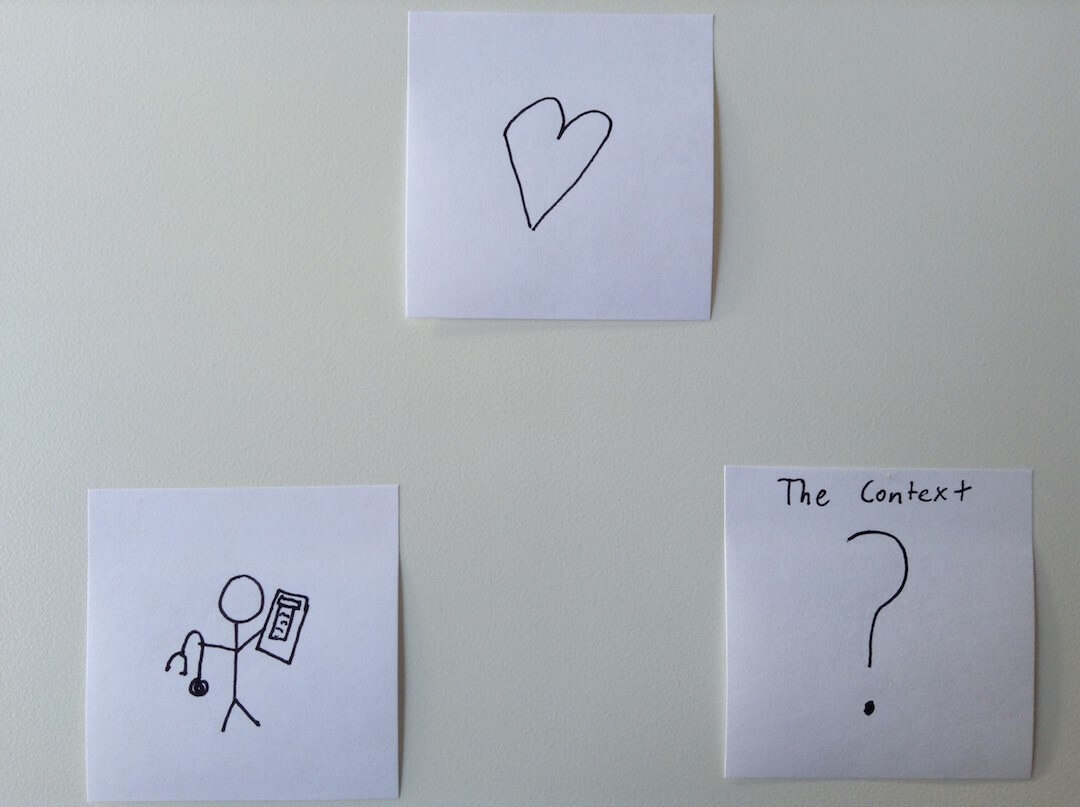

Bailey’s Human Performance Model states that there are three parts to be considered: the task, the user, and the context. If the designers look only at the task of running the power plant, they have an incomplete picture.

The design of a system should change considerably depending on who its users are. If your user is Steven the wheat farmer, recently retrained as a power technician, then your system might look completely different from the one you would design for Ingrid the nuclear physicist.

Adding just one more factor already gives a more complete picture of the system you should be designing. With only two of the three factors, though, there is still a gaping hole in the picture.

Besides not understanding who the users are, designers also run into trouble when they don’t take into account the environment in which those users perform their tasks.

Considered outside of the hospital environment, a heart rate monitor seems to operate safely and correctly. However, these machines are not used in a controlled environment.

The same year as the accident at Chernobyl, there was another accident, this time in a children’s hospital in Seattle. A young patient needed to be hooked up to a heart monitor so that her condition could be tracked as she slept. To the nurse who was hooking her up, this was nothing new; she had carefully taped the EKG electrodes to the girl’s chest and was now ready to plug the lead into the cord from the heart monitor machine. This time, though, was slightly different. This time another machine, a portable intravenous pump, stood near the heart monitor by the little girl’s bed.

When the nurse went to grab the cord from the heart monitor machine, she accidentally grabbed an almost identical cord from the intravenous pump. Because this was a portable intravenous pump, it came with a detachable power cord so that it could also run on battery power. The nurse had no way of knowing that the cord she had just grabbed was a live electrical circuit. (Set Phasers on Stun, Steven Casey, 1998)

Knowing only the task and the user was not enough to get a complete picture of the system that needed to be designed.

This accident could have been prevented if the designers had considered the other machines that might be in the room. Had they known that a portable intravenous pump might be there, the heart monitor designers could have produced a cord that not only looked different but was also physically incapable of fitting into the pump’s power cord.

Without the context of this equipment and the other distractions present in a hospital setting, you cannot get a good idea of what your design considerations should be, and therefore you can’t avoid making mistakes with your design. And in safety systems, mistakes cost lives.

So before you build a system, figure out not only what the tasks are, but also who your users are, and the context in which they will be completing these tasks. And then test these systems in real environments and improve upon them. You might not be able to anticipate every problem that could occur, but if you catch even one potential problem then you have helped avert disaster.
